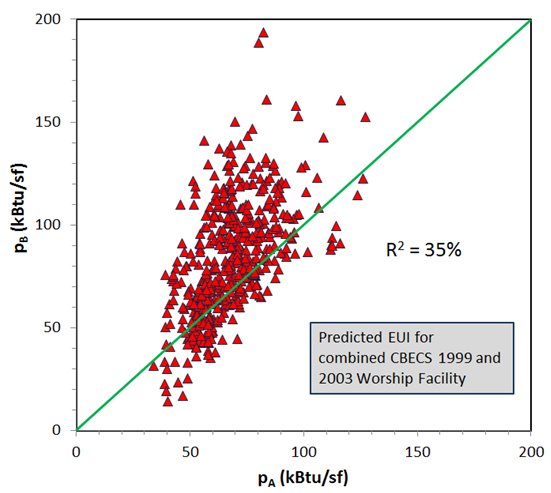

As I have written before, and as I argue at length in my recent book, my work shows that the EPA’s ENERGY STAR benchmarking scores for most building types are little more than placebos. The signature feature of the ENERGY STAR benchmarking scores is the assumption that the EPA can adjust for external factors that affect building energy use. This adjustment is based on linear regression performed on a relatively small dataset. For most building types this regression dataset was extracted from the Energy Information Administration’s 2003 Commercial Building Energy Consumption Survey (CBECS). The EPA has never demonstrated that these regressions accurately predict a component of the energy use of the larger building stock. The agency simply performs its regression and assumes that it accurately predicts energy use intensity (EUI) for other similar buildings.

In the last three years I have challenged this assumption by testing whether the EPA regression accurately predicts energy use for buildings in a second, equivalent dataset taken from the earlier, 1999 CBECS. In general I find these predictions to be invalid. For one type of building — Supermarkets/Grocery Stores — I find the EPA’s predictions to be no better than those of randomly generated numbers!
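The general shape of such an out-of-sample check can be sketched in a few lines of Python. This is only an illustration, not the actual analysis: the data are synthetic, the helper names (`fit_ols`, `predict`, `r_squared`, `make_sample`) are invented for the example, and survey weights are ignored. A model is fitted on one sample, used to predict a second equivalent sample, and then compared against a baseline in which the same predictions are randomly shuffled.

```python
import numpy as np

def fit_ols(X, y):
    """Ordinary least squares with an intercept; returns the coefficient vector."""
    A = np.column_stack([np.ones(len(y)), X])
    beta, *_ = np.linalg.lstsq(A, y, rcond=None)
    return beta

def predict(beta, X):
    """Apply fitted coefficients to a new set of predictors."""
    return np.column_stack([np.ones(len(X)), X]) @ beta

def r_squared(y, y_hat):
    """Share of variance in y explained by y_hat (can be negative out of sample)."""
    ss_res = np.sum((y - y_hat) ** 2)
    ss_tot = np.sum((y - y.mean()) ** 2)
    return 1.0 - ss_res / ss_tot

rng = np.random.default_rng(42)

def make_sample(n):
    """Synthetic stand-in for one survey sample (hypothetical numbers)."""
    X = rng.normal(size=(n, 2))
    y = 200 + 30 * X[:, 0] + rng.normal(scale=50, size=n)
    return X, y

X_a, y_a = make_sample(300)   # plays the role of the fitting dataset
X_b, y_b = make_sample(300)   # plays the role of the second, equivalent dataset

beta = fit_ols(X_a, y_a)
pred_b = predict(beta, X_b)

r2_model = r_squared(y_b, pred_b)
r2_random = r_squared(y_b, rng.permutation(pred_b))  # shuffled-prediction baseline
print(f"model R^2 on second sample: {r2_model:.3f}")
print(f"shuffled baseline R^2:      {r2_random:.3f}")
```

In this synthetic case both samples share the same underlying relationship, so the fitted model should clearly beat the shuffled baseline. A regression that does no better than the baseline on a second sample is one whose predictions carry no usable information about that sample.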

In May of this year the EIA released public data for its 2012 Commercial Building Energy Consumption Survey. These new data provide yet another opportunity to test the EPA’s predictions for nine different kinds of buildings. They will either validate the EPA’s regression models or confirm my earlier conclusion that the models are invalid. Over the next year I will be extracting 2012 CBECS data to test again the nine ENERGY STAR benchmarking models that are based on CBECS data.

This week I performed the first of these tests for K-12 Schools. 539 records were extracted from the CBECS 2012 data for K-12 Schools representing 230,000 schools totalling 9.2 billion gsf. After filtering these records based on EPA criteria, 431 records remain, representing a total of 137,000 schools with 8.0 billion gsf.
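For readers unfamiliar with survey data, the relationship between sampled records and the stock they "represent" comes from per-record survey weights: each record stands in for some number of real buildings. The sketch below shows that arithmetic on four made-up records. The field names (`sqft`, `weight`, `months_in_use`) and the two filter thresholds are hypothetical placeholders, not the actual CBECS variable names or the EPA's filter criteria.

```python
# Four invented records; "weight" is the number of real buildings each one represents.
records = [
    {"sqft": 60_000, "weight": 250.0, "months_in_use": 10},
    {"sqft": 3_000, "weight": 900.0, "months_in_use": 12},
    {"sqft": 120_000, "weight": 100.0, "months_in_use": 6},
    {"sqft": 45_000, "weight": 400.0, "months_in_use": 9},
]

def weighted_totals(rows):
    """Return (buildings represented, total floor area represented)."""
    n_buildings = sum(r["weight"] for r in rows)
    total_sqft = sum(r["weight"] * r["sqft"] for r in rows)
    return n_buildings, total_sqft

def passes_filters(r):
    """Hypothetical filters standing in for the EPA criteria."""
    return r["sqft"] >= 5_000 and r["months_in_use"] >= 8

before = weighted_totals(records)
kept = [r for r in records if passes_filters(r)]
after = weighted_totals(kept)

print(len(records), "records ->", before)
print(len(kept), "records ->", after)
```

Note that dropping a single heavily weighted record can remove far more of the represented stock than its share of the record count suggests.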

I performed the EPA’s weighted regression for K-12 Schools on this final dataset and obtained results totally inconsistent with those obtained by the EPA using CBECS 2003 data. Only 3 of the 11 variables identified by the EPA as “significant predictors” of building Source EUI for K-12 Schools demonstrated statistical significance with the 2012 data. Numerous other comparisons confirmed that the EPA’s regression demonstrated no validity with this new dataset.
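To make "statistical significance" concrete, here is a minimal sketch of a weighted least-squares fit that reports a t-statistic for each coefficient. It is not the EPA's model or code: the data are synthetic, the function name `weighted_regression` is invented for the example, three generic predictors stand in for the EPA's 11 variables, and the standard errors are the classical weighted least-squares ones, which a full survey analysis may compute differently.

```python
import numpy as np

def weighted_regression(X, y, w):
    """Weighted least squares; returns coefficients and their t-statistics."""
    A = np.column_stack([np.ones(len(y)), X])    # prepend intercept column
    sw = np.sqrt(w)
    Aw, yw = A * sw[:, None], y * sw             # scale each row by sqrt(weight)
    beta, *_ = np.linalg.lstsq(Aw, yw, rcond=None)
    resid = yw - Aw @ beta
    dof = len(y) - A.shape[1]                    # residual degrees of freedom
    cov = (resid @ resid / dof) * np.linalg.inv(Aw.T @ Aw)
    return beta, beta / np.sqrt(np.diag(cov))

rng = np.random.default_rng(0)
n = 431                                          # same size as the filtered dataset
X = rng.normal(size=(n, 3))                      # three synthetic predictors
w = rng.uniform(50, 500, size=n)                 # synthetic survey weights
y = 100 + 20 * X[:, 0] + rng.normal(scale=30, size=n)  # only the first one matters

beta, t_stats = weighted_regression(X, y, w)
significant = np.abs(t_stats[1:]) > 1.96         # roughly the 5% two-sided level
print("coefficients:", np.round(beta, 2))
print("significant predictors:", significant)
```

A predictor that is genuinely related to energy use should keep a large t-statistic when the same regression is rerun on a fresh sample of similar size. A predictor that was "significant" only by chance in the original sample usually will not.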

The EPA will no doubt suggest that their model was valid for the 2003 building stock but not for the 2012 stock, because the stock has changed so much in the intervening 9 years! While this seems plausible, the explanation does not hold water. First, CBECS 2012 data do not suggest significant change in either the size or energy use of the K-12 School stock. Moreover, it cannot explain why the EPA regression was not valid for the 1999 building stock, unless the EPA is to suggest that the stock changes so much in just 4 years as to render the regression invalid. And if that is the EPA position, then why would they even attempt to roll out new ENERGY STAR regression models for K-12 Schools based on 2012 CBECS data more than 4 years after these data were valid? You can’t have it both ways. Either the stock changes rather slowly and a 4-year delay is not important, or this benchmarking methodology is doomed to be irrelevant from the start.

The more plausible explanation, supported by my study, is that the EPA’s regression is simply based on insufficient data and is not valid, even for the 2003 building stock. I suggest that a regression on a second, equivalent sample from the 2003 stock would yield results that differ from the EPA’s original regression. The EPA’s ENERGY STAR scores have no more validity than sugar pills.